At the 2026 AGIBOT Conference: Embodied AI moving into the deployment phase

The conversation around embodied AI (robots as a physical interface to artificial intelligence) is evolving. While in past years the focus was on whether robots could walk, perceive, and interact, the current question is whether these systems can work reliably enough to be integrated into real-world production.

At its 2026 partner conference, the robotics company AGIBOT announced a shift towards an embodied AI “deployment phase”, in which the company develops systems built for reliable, real-world performance. The focus was on a cohesive, layered strategy that integrates products, models, deployment technologies, and ecosystem infrastructure.

At a technical level, AGIBOT’s architecture is built around movement, interaction, and manipulation. The premise is that these capabilities cannot be considered separately if the robot is to operate in a real-world workflow. Movement enables access, interaction enables coordination, and task execution generates value. The company’s approach ties these together into an integrated stack spanning hardware, perception, control systems, operating systems, and embedded AI models, with the aim of reducing fragmentation and accelerating iteration across the entire system.

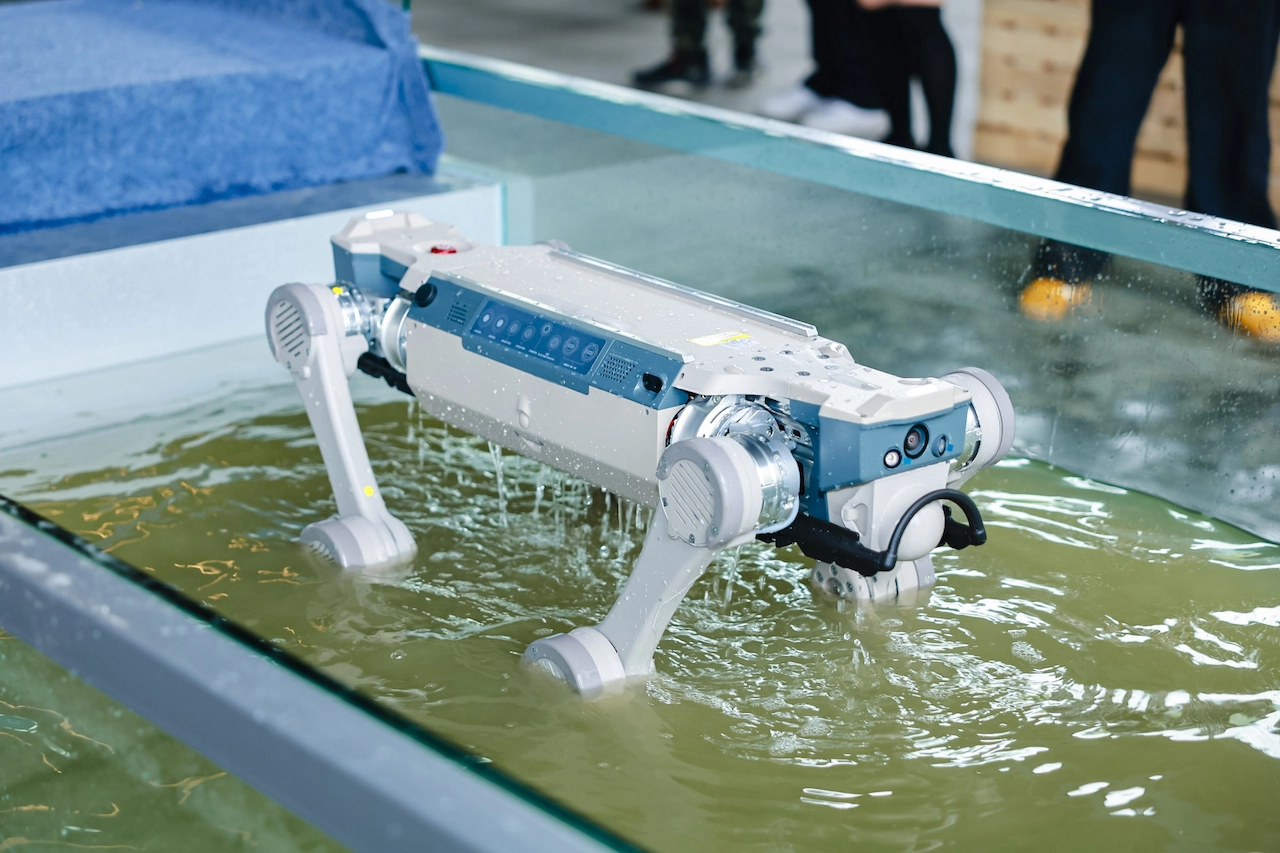

That integration is reflected in its updated third-generation product lineup. AGIBOT has created a range of robotic products covering humanoid, wheeled, and quadrupedal forms, each aligned with different operational environments, so that the form factor matches the task. Additionally, the company introduced six AI models aligned with three intelligence layers, including motion-control models, multimodal interaction systems, and task-oriented models designed to handle longer, more complex operations.

AGIBOT presented seven production solutions covering manufacturing, logistics, commercial services, inspection, and cleaning, which are already operating in real-world environments. The distinction is important: these are not custom integrations, but standardized, repeatable solutions designed to scale.

To support that change, AGIBOT is building layers of infrastructure that extend beyond robots. Its AIMA (AI Machine Architecture) ecosystem aims to act as a full-stack development environment, lowering the barrier to deploying and customizing embedded AI systems. At the same time, the company introduced a massive data initiative and a global robot rental network, Sharebot, which allows partners to access robots as a service rather than through ownership. This reduces upfront costs, accelerates adoption, and creates a continuous loop in which deployment generates data, the data improves the models, and the improved models feed back into deployment.

Behind all this is a clear attempt to define the direction of the industry. AGIBOT outlined the “XYZ curve” as a framework for embodied AI development, with the last few years representing a phase in which robots learned to walk, and the coming years focusing on whether they can consistently perform useful tasks. The company sees 2026 as the beginning of that change.

What APC 2026 ultimately presented was a system-level view of embodied AI. Robots do not work in isolation, and neither do the systems that support them. Progress, in this view, means a system that can be deployed, replicated, and scaled. In this sense, the industry is entering a phase in which artificial intelligence finally becomes readily available in the physical world.
